Introduction

Exactly five days before I wrote this article, Oracle, a technology giant, laid off 30,000 employees with a simple email sent at 6 a.m. I found myself reflecting on this for a few days, especially since layoffs at big tech companies alone had already reached 80,000 employees worldwide in the first three months of 2026.

I struggled with myself trying to rationalize this as just another cycle shift. After all, the work model has gone through several transformations throughout history and, in general, new roles emerge, markets reorganize, and the economy finds a new balance. And no, this is not an apocalyptic article about robots replacing humans.

But as I observed the speed and nature of these changes, a concern started to make more sense to me, especially from the perspective of a Product Manager: maybe humanity is not just going through another transformation.

Maybe we are facing a moment where the very model of value based on human labor needs a pivot.

The current model

The current model is relatively clear: humans study, develop skills, and, by differentiating themselves, increase their value in the market. This value can translate into income, recognition, or impact.

Historically, this has always been sustained by scarcity: few had access to knowledge, few were able to execute with quality, and few mastered advanced cognitive skills.

We can look at classic examples such as Mozart and Beethoven in music, Isaac Newton in science, or even contemporary figures like Messi, Steve Jobs, and Bill Gates. They all had something in common: a rare ability to generate value, whether cognitive, physical, or a combination of both.

In general, humans have always stood out through their cognitive abilities, their physical abilities, or the ability to apply both to generate impact. This model has always encouraged individuality, the pursuit of excellence, and differentiation.

And so far, it has worked.

The disruption of the current model

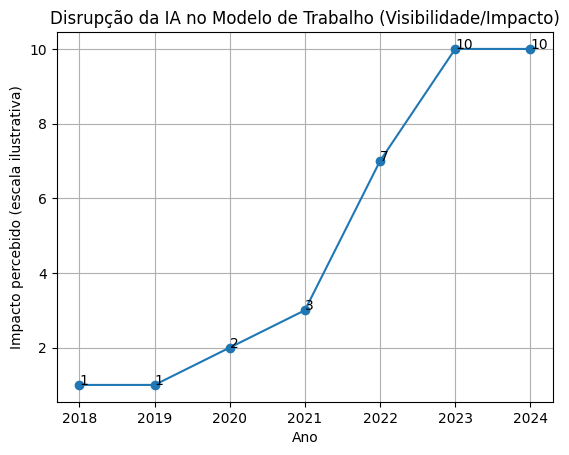

The disruption caused by artificial intelligence began to become visible to the general public around 2023, even though its impacts had been developing long before that.

Since then, the way we work, learn, research, respond, and even communicate has started to change significantly.

One of the most emblematic statements of this period was made by Elon Musk in 2025, when he stated that virtually all content available on the internet had already been consumed for training language models. This sparked intense discussions about intellectual property, data usage, and the role of human-generated content in this new landscape.

But beyond legal and ethical discussions, there is a structural change happening.

For the first time in history, knowledge is not just accessible.

It is operational.

Language models do not just store information. They can interpret, synthesize, and apply knowledge on demand. And that alone would already be enough to generate a significant impact on intellectual work.

But the change does not stop there.

It is also advancing into what we have always considered exclusively human: the ability to create and execute. Today, artificial intelligence systems are already capable of composing music, generating paintings, writing scripts, editing videos, creating full advertising campaigns, and even assisting or driving vehicles.

In other words, we are not just automating operational or analytical tasks. We are automating cognition, creativity, and execution at the same time.

Historically, these three dimensions have sustained the value of human work. And that is exactly what makes this disruption different from previous ones.

Cognitive leveling

If the human advantage used to be the ability to acquire and apply knowledge, we are now entering a scenario where this ability can be amplified, or even partially replaced, by artificial intelligence systems.

This creates an important effect: cognitive leveling.

Tasks that once required years of study, practice, and experience can now be executed with the help of AI in a matter of seconds. This does not mean specialists cease to exist, but it does mean that the gap between a specialist and an intermediate practitioner shrinks drastically.

And when that happens, the marginal value of cognitive work tends to decline. From a product perspective, we are seeing something classic:

- massive increase in supply

- reduction in production cost

- resulting commoditization

If anyone can produce text, code, analysis, music, images, or even strategies with the support of AI, then cognitive ability alone ceases to be a differentiator.

The impact on the model of humanity

This leads us to an even more uncomfortable question:

If not only cognitive work, but also creation and part of execution are no longer scarce, what sustains the model of humanity we have built so far? A large part of our society has been structured around a simple concept:

Humans generate value through their work. And this value is directly tied to their ability to think better, create better, or execute better than others.

But what happens when those capabilities are no longer differentiators? When anyone can access a cognitive layer on demand? When creation no longer requires skill? When execution becomes automated? We are not just talking about an impact on the job market. We are talking about a structural misalignment.

The current model of humanity assumes scarcity of capability, but what we are building now is availability.

And when a model based on scarcity meets an environment of availability, it does not adjust. It breaks. This does not mean humanity has failed, but it may mean that the model we use to organize value, effort, and reward is no longer compatible with the world that is emerging.

And, like any product, when the context changes and the model no longer makes sense, there is only one alternative:

PIVOT.

Conclusion

Maybe we are not facing a job market crisis. Maybe we are facing an incompatibility between the current model of humanity and the world we are building.

For decades, we structured society around a simple principle: humans generate value through their ability to think, create, and execute better than others. But for the first time, these three dimensions are being impacted simultaneously. This is not just about task automation.

We are witnessing the reduction of the production cost of anything that can be transformed into information, whether it is text, an image, code, a film, music, or even a decision. And when cognitive cost tends to zero, differentiation based on it also tends to disappear.

Does this mean the end of humanity? I do not believe so.

It means that what sustained its value proposition within the current model may no longer make sense. If we take this line of reasoning to the extreme, some scenarios begin to emerge. They are not exactly comfortable:

- A world where human work loses so much value that large parts of the population are no longer needed in economic terms.

- A clear division of society, where one side holds capital, access to technology, and control over artificial intelligence systems, including big tech companies and global magnates, while on the other side, there is a mass that consumes but does not control, competing for relevance in a world where human effort is worth less and less.

- A fragmented world: environments with controlled access, closed networks, a kind of private and restricted internet, where access to artificial intelligence is limited or nonexistent.

It may sound exaggerated. But historically, big structural changes have rarely been linear or balanced. And honestly, the right question may not be which of these scenarios will happen, but which one has already begun.

And in the end, if the current model no longer makes sense, what will the next one be, and how important will humans be within it?